Sonify is on GitHub

Nov 3, 2010

by Mark

I made a couple of revisions to Sonify over the last week. Most significantly I replaced GD Library with SDL. Now Sonify can draw decoded audio into a window and resize its output using nearest-neighbor scaling — preserve those pixels!
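For anyone curious what nearest-neighbor scaling amounts to, here is a minimal Python sketch. It is not Sonify's code: the function name `scale_nearest` and the list-of-rows image layout are assumptions made for illustration. Each output pixel simply copies the closest source pixel, so no new colors are blended in.

```python
def scale_nearest(pixels, new_width, new_height):
    """Resize a 2D grid of pixels using nearest-neighbor sampling.

    `pixels` is a list of rows; each row is a list of pixel values.
    (Hypothetical layout, chosen only to keep the example short.)
    """
    old_height = len(pixels)
    old_width = len(pixels[0])
    scaled = []
    for y in range(new_height):
        # Map the destination row back to a source row with integer floor division.
        src_y = y * old_height // new_height
        row = []
        for x in range(new_width):
            # Same mapping for columns: every output pixel copies one source pixel.
            src_x = x * old_width // new_width
            row.append(pixels[src_y][src_x])
        scaled.append(row)
    return scaled


if __name__ == "__main__":
    # Doubling a 2x2 image turns each pixel into a 2x2 block.
    for row in scale_nearest([[1, 2], [3, 4]], 4, 4):
        print(row)
```

Because pixels are copied rather than interpolated, hard edges stay hard, which is the "preserve those pixels" effect.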

Note: The screenshot above also shows JackPilot — part of the Jack OS X project.

Sonify uses Pascal Getreuer's colorspace for converting between HSL and RGB color models. Puzzlingly, my tests show that the conversions I am doing are more than a little imprecise. Compare the image above to the two below: the bottom left graphic is the source image; the screenshot above shows the image reconstructed using sine waves in the frequency range of 100-1100 Hz; and the bottom right screenshot shows the image reconstructed using square waves over the same frequency range. The two instances of Sonify were launched using `sonify sfy color.png 1000 100 10 sin 2` and `sonify sfy color.png 1000 100 10 sq 2`.
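To make the sine versus square comparison concrete, here is a rough Python sketch of the two oscillator shapes over a 100-1100 Hz range. How Sonify actually assigns image data to frequencies is not described here, so the linear row-to-frequency mapping and the names `row_frequency`, `sine_wave`, and `square_wave` are assumptions for illustration only.

```python
import math


def row_frequency(row, num_rows, low_hz=100.0, span_hz=1000.0):
    """Spread rows linearly over [low_hz, low_hz + span_hz] (assumed mapping)."""
    if num_rows == 1:
        return low_hz
    return low_hz + span_hz * row / (num_rows - 1)


def sine_wave(freq_hz, t):
    """Sample a unit-amplitude sine wave at time t (seconds)."""
    return math.sin(2.0 * math.pi * freq_hz * t)


def square_wave(freq_hz, t):
    """Sample a unit-amplitude square wave at time t (seconds).

    The output is +1 during the first half of each cycle and -1 during the second.
    """
    phase = (freq_hz * t) % 1.0
    return 1.0 if phase < 0.5 else -1.0


if __name__ == "__main__":
    freq = row_frequency(0, 11)  # lowest row of an 11-row image
    for t in (0.0025, 0.0075):
        print(freq, t, sine_wave(freq, t), square_wave(freq, t))
```

A square wave carries odd harmonics on top of its fundamental, so energy from one band can show up in others when the audio is decoded. That may be part of why the two reconstructions look different, though I have not verified this against Sonify itself.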

I am guessing the apparent phase-shift error either comes from imprecision in the data types of my variables or propagates through the colorspace conversions; however, I have yet to examine this.
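One way to start separating those two hypotheses is a round-trip test: convert a color to HSL, optionally quantize it, convert back, and measure the drift. The sketch below uses Python's standard `colorsys` module (which orders the channels as HLS) rather than Pascal Getreuer's colorspace, so it only illustrates the test and says nothing about that library's accuracy. The function name `roundtrip_error` and the `levels` parameter are made up for this example.

```python
import colorsys


def roundtrip_error(r, g, b, levels=None):
    """Convert 8-bit RGB to HLS and back; return the largest channel error.

    If `levels` is given, each HLS channel is quantized to that many steps
    before converting back, imitating storage in a low-precision data type.
    """
    h, l, s = colorsys.rgb_to_hls(r / 255.0, g / 255.0, b / 255.0)
    if levels is not None:
        steps = levels - 1
        # Snap each channel to the nearest representable step.
        h, l, s = (round(c * steps) / steps for c in (h, l, s))
    r2, g2, b2 = colorsys.hls_to_rgb(h, l, s)
    restored = [round(c * 255) for c in (r2, g2, b2)]
    return max(abs(a - z) for a, z in zip((r, g, b), restored))


if __name__ == "__main__":
    color = (200, 30, 90)
    print("float round trip:", roundtrip_error(*color))
    print("16-level round trip:", roundtrip_error(*color, levels=16))
```

If the unquantized round trip is clean but the quantized one drifts, the data types are the more likely culprit; if both drift, the conversion code itself deserves a closer look.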

Instead, I spent time over the past couple of days scrubbing and commenting Sonify's source files. The code I posted below (including the manner of writing to disk, unused variables, and crap I wrote at 4:00 AM) is just horrible, really. But now anyone can peek into the project, get a clear sense of what's going on, and start hacking, which I hope people do, because...

Sonify is on GitHub! https://github.com/markandrus/Sonify

Killer! I was working on a Haskell program for a lab two weeks ago when I accidentally rm-ed my source code — some form of version control would have been helpful! Now I plan to use git with my future projects and push as many as are worthwhile to GitHub.

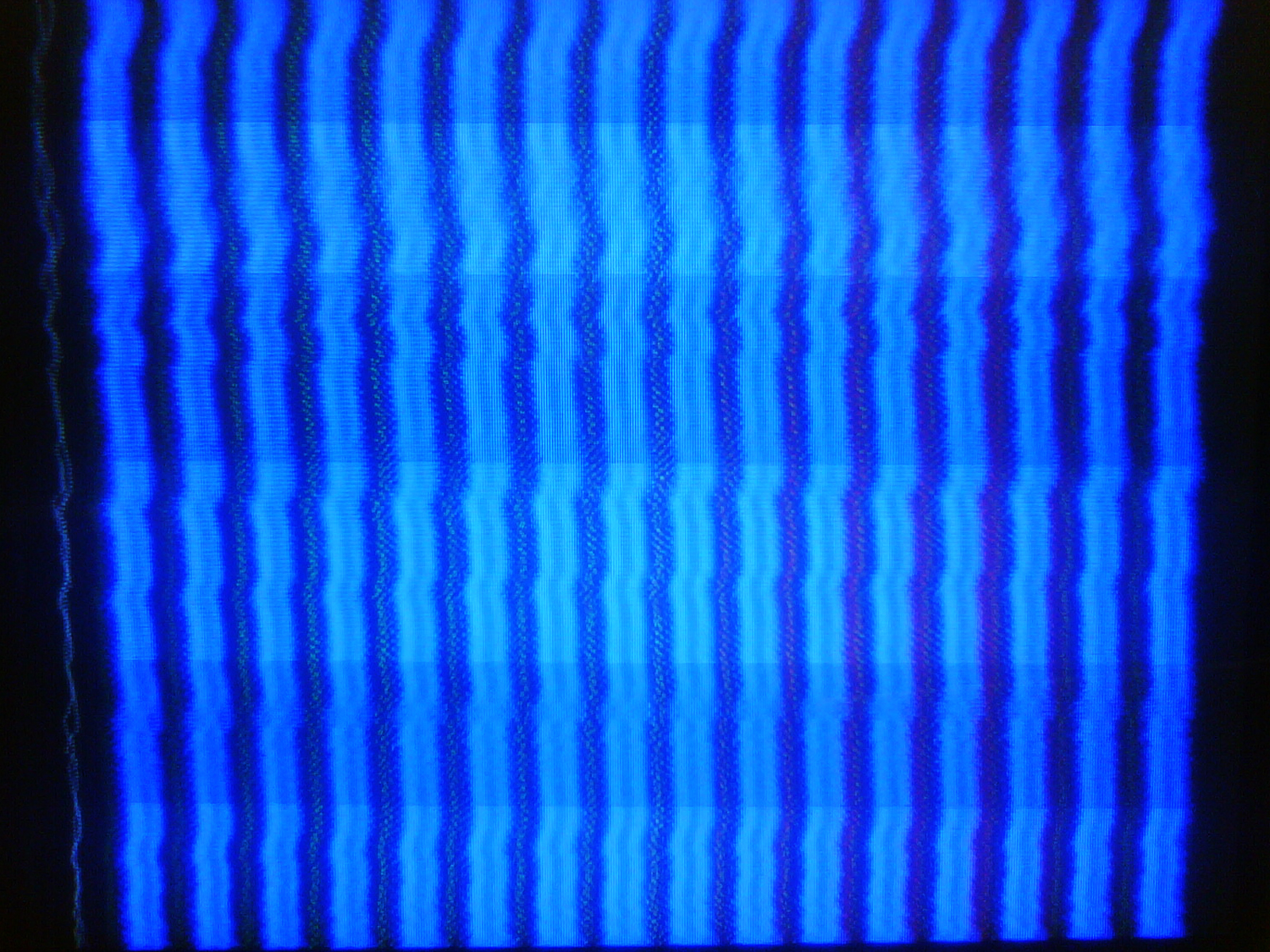

I'll leave you with some glitchy images I made while feeding Sonify a wavering synthesizer signal from Ableton Live:

It's really fun to see what kind of patterns emerge out of this system!